The majority of the implementation lives in the abstract base class MLBaseClass.py, with some auxiliary classes and functions in _utils.py, and each of the algorithms is written in a separate class. PLATIPUS is slightly different since the algorithm mixes the training and validation subsets, and hence it is implemented in a separate file. The main program is specified in main.py.

The operating principle of the implementation can be divided into 3 steps:

Step 1: initialize hyper-net and base-net

Recall the nature of meta-learning as a generative process, where θ denotes the parameter of the hyper-net, w is the base-model parameter, and (x, y) is the data. The implementation is designed to follow this generative process, where the hyper-net generates the base-net. It can be summarized in the following pseudo-code:

    # initialization
    base_net = ResNet18()  # base-net

    # convert the conventional module to its functional form
    f_base_net = torch_to_functional_module(module=base_net)

    # make hyper-net from the base-net
    hyper_net = hyper_net_cls(base_net=base_net)
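To make the "hyper-net generates the base-net" idea concrete, here is a minimal pure-Python sketch. It is not the code in MLBaseClass.py: the names `base_forward`, `IdentityHyperNet`, and `GaussianHyperNet` are hypothetical, and the two hyper-net variants (a point estimate and a Gaussian sample) are assumptions chosen only to illustrate the flow θ → w → prediction on (x, y).

```python
import math
import random


def base_forward(w, x):
    """Functional base-net: a linear model whose parameters w are passed in explicitly."""
    return w[0] * x + w[1]


class IdentityHyperNet:
    """Hypothetical hyper-net whose parameter theta is itself the base-net parameter."""

    def __init__(self, base_params):
        # theta is initialised from the base-net parameters
        self.theta = list(base_params)

    def generate(self):
        # point estimate: w is a copy of theta
        return list(self.theta)


class GaussianHyperNet:
    """Hypothetical hyper-net that samples w from a diagonal Gaussian (mean, log_std)."""

    def __init__(self, base_params, log_std=-2.0):
        self.mean = list(base_params)
        self.log_std = [log_std] * len(self.mean)

    def generate(self, rng):
        # w = mean + std * eps, with eps drawn from a standard normal
        return [m + math.exp(s) * rng.gauss(0.0, 1.0)
                for m, s in zip(self.mean, self.log_std)]


# Step 1 in miniature: base-net parameters, then a hyper-net built from them
base_params = [2.0, 1.0]
hyper_net = IdentityHyperNet(base_params)

# generative process: theta -> w -> prediction for an input x
w = hyper_net.generate()
y_hat = base_forward(w, 3.0)
```

The point of the design is that the base-net never owns its parameters: whatever the hyper-net emits is handed to the functional forward pass, so swapping the hyper-net changes the algorithm without touching the base model.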

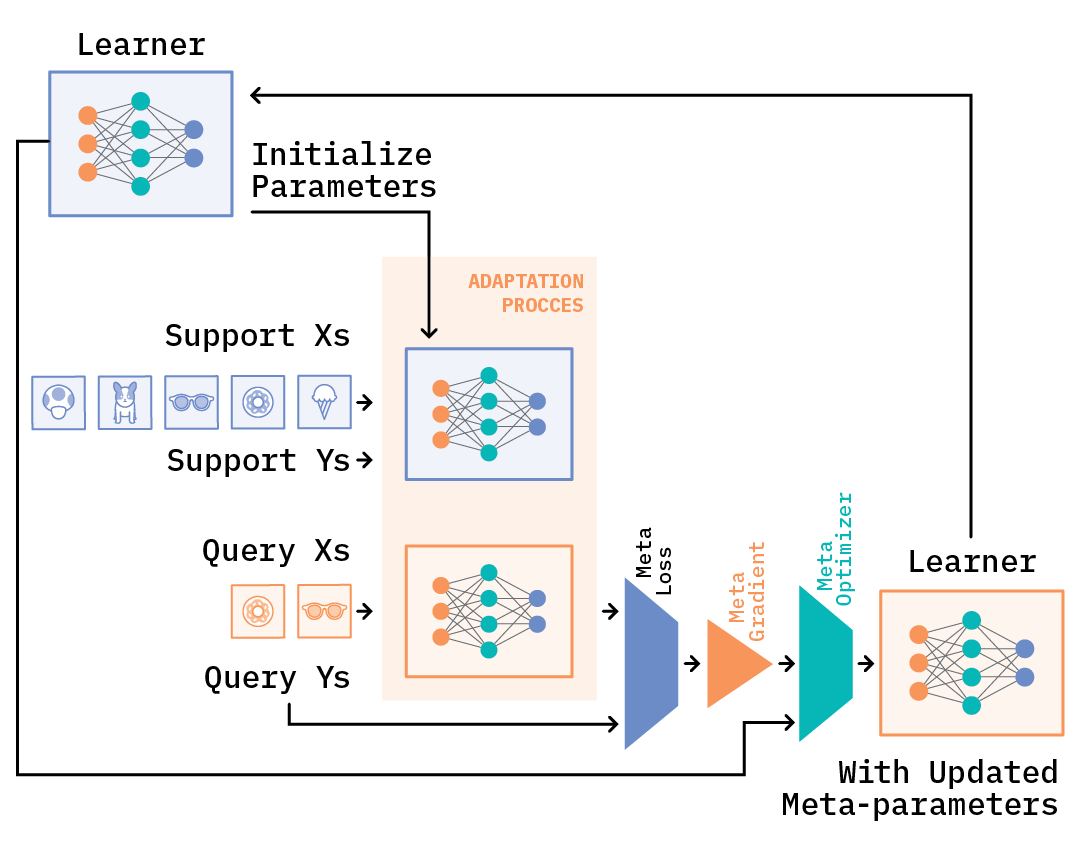

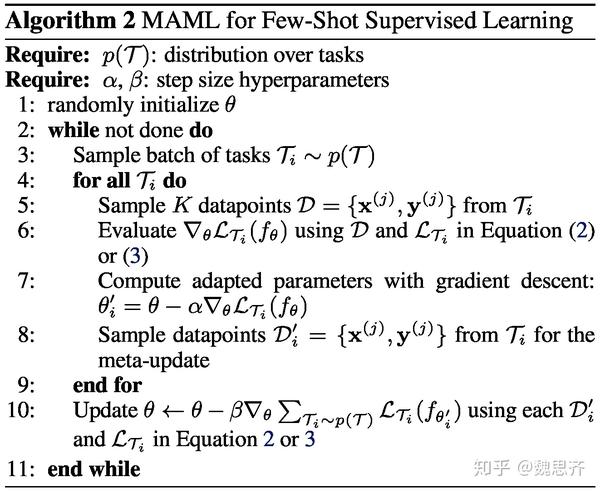

The MAML paper abstract reads: We propose an algorithm for meta-learning that is model-agnostic, in the sense that it is compatible with any model trained with gradient descent and applicable to a variety of different learning problems, including classification, regression, and reinforcement learning. The goal of meta-learning is to train a model on a variety of learning tasks, such that it can solve new learning tasks using only a small number of training samples. In our approach, the parameters of the model are explicitly trained such that a small number of gradient steps with a small amount of training data from a new task will produce good generalization performance on that task. In effect, our method trains the model to be easy to fine-tune. We demonstrate that this approach leads to state-of-the-art performance on two few-shot image classification benchmarks, produces good results on few-shot regression, and accelerates fine-tuning for policy gradient reinforcement learning with neural network policies.

The usage pattern with higher is as follows (the right-hand sides of several calls are cut off in the source and are left as they appear):

- define a network: `resnet18 = torchvision.…`
- get its parameters: `params = list(resnet18.…`
- convert the network to its functional form: `f_resnet18 = higher.…`
- forward with the functional form, handling the parameters explicitly: `y1 = f_resnet18.…`
- update the parameters: `new_params = update_parameter(params)`
- forward on different data with the new parameters: `y2 = f_resnet18.…`

Hence, we only need to load or specify the "conventional" model written in PyTorch, without manually re-implementing its "functional" form. A few common models are implemented in CommonModels.py.

Although higher provides convenient APIs to track gradients, it does not allow us to use the "first-order" approximation, resulting in more memory use and longer training time. I have created a work-around to enable the "first-order" approximation, which is controlled by setting -first-order=True when running the code.
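To show what the "first-order" approximation drops, here is a small pure-Python sketch on a scalar quadratic loss. It is not the work-around used in the repository and does not use higher; the function names (`grad`, `adapt`, `meta_grad`) and the quadratic loss are assumptions made for illustration. The exact meta-gradient multiplies the query gradient by the derivative of the adapted parameter with respect to θ, while the first-order version treats that derivative as 1.

```python
def grad(a, c, w):
    """Gradient of the quadratic loss 0.5 * a * (w - c)**2 with respect to w."""
    return a * (w - c)


def adapt(theta, a_s, c_s, lr):
    """One inner gradient step on the support loss, with the parameter passed explicitly."""
    return theta - lr * grad(a_s, c_s, theta)


def meta_grad(theta, support, query, lr, first_order):
    """Gradient of the query loss at the adapted parameter, taken with respect to theta."""
    a_s, c_s = support
    a_q, c_q = query
    w = adapt(theta, a_s, c_s, lr)
    g_w = grad(a_q, c_q, w)  # d(query loss) / dw
    if first_order:
        # first-order approximation: ignore how w depends on theta through the inner step
        return g_w
    # exact chain rule: dw/dtheta = 1 - lr * a_s (the second-derivative term)
    return g_w * (1.0 - lr * a_s)


support = (2.0, 1.0)  # (curvature, optimum) of the support loss
query = (1.0, 3.0)    # (curvature, optimum) of the query loss
exact = meta_grad(0.0, support, query, lr=0.1, first_order=False)
approx = meta_grad(0.0, support, query, lr=0.1, first_order=True)
```

In a real network the factor dropped here is a Hessian-vector product, which is why keeping it requires storing the inner-loop computation graph and costs more memory and time.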
Reference: Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks. In Proceedings of the 34th International Conference on Machine Learning, Proceedings of Machine Learning Research.
